I spent most of last Tuesday staring at a flickering neon sign across from a coffee shop in Austin, Texas, wondering if I had finally become obsolete. My screen showed six different projects moving at a pace that would have killed a human department three years ago. There were no frantic Slack messages. No one was asking for approval on a brand style guide or complaining about a broken API integration. The work was just happening. This is the era we stumbled into, where the dream of autonomous teams shifted from a boardroom buzzword into a quiet, almost unsettling daily reality.

Managing used to be about friction. You were the grease in the gears, the person who made sure Person A talked to Person B so that Task C didn’t fall through the cracks. Now, the gears have learned to grease themselves. We are presiding over systems that think, pivot, and execute with a level of coordination that makes the old “Agile” stand-ups look like a slow-motion car crash. It forces a hard question upon anyone with a director title: if the team doesn’t need you to manage their output, what exactly are you doing in that expensive chair?

The shift toward this hands-off architecture isn’t about laziness. It is about the realization that human bottlenecks are the most expensive part of any modern enterprise. We’ve reached a point where the speed of silicon-based execution has finally outrun the speed of human consensus. If I have to step in to mediate a dispute between two autonomous agents optimizing a supply chain, I’ve already lost the competitive advantage of the system.

Rethinking the human element in business automation

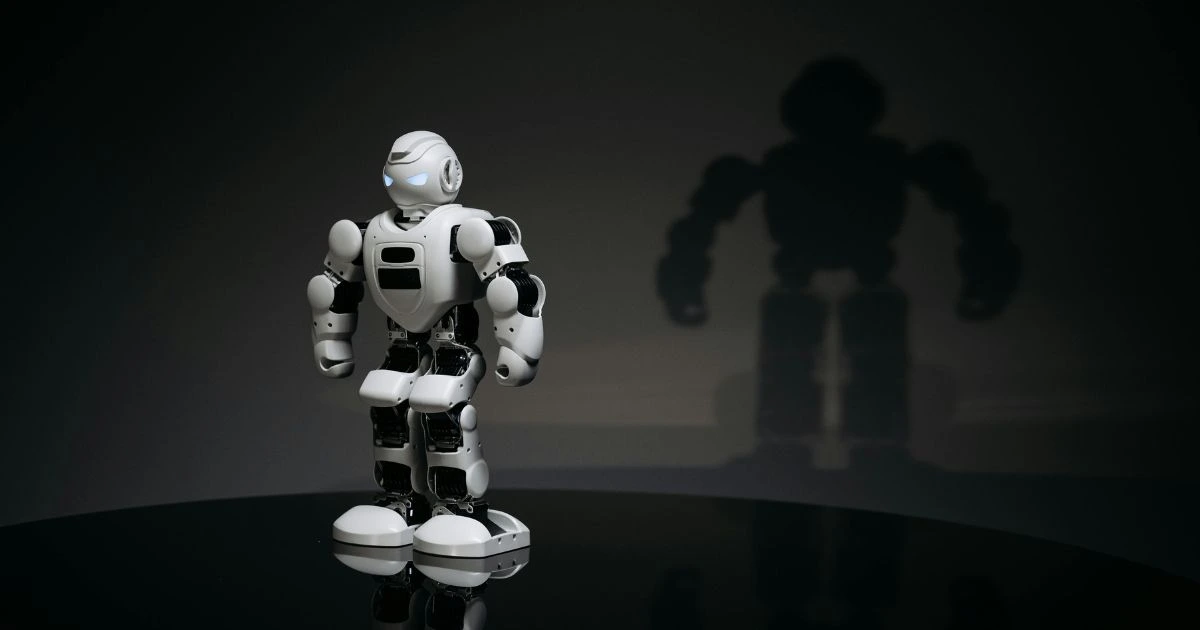

We used to think automation was about robots on a factory floor or scripts that moved data from one spreadsheet to another. That was the old world. Modern business automation is more like a biological system. It grows, it adapts, and occasionally, it hallucinates in ways that are strangely creative. The leadership required here isn’t about giving orders; it is about setting the initial conditions and then having the stomach to stay out of the way.

I’ve noticed that the managers who are drowning right now are the ones trying to micromanage the logic. They want to see the “why” behind every microscopic decision an autonomous agent makes. They treat the system like a junior employee who needs constant hand-holding. But these systems don’t work like that. They operate on high-level intent. If you give them a broad goal, they will find the most efficient path, which is often a path a human brain would never consider because we are burdened by “the way we’ve always done it.”

There is a certain grief in letting go of the control panel. I remember the first time I realized my primary marketing agent had killed a campaign I loved because the real-time conversion data suggested it was a vanity project. It was right, of course. My ego wanted the campaign to live, but the autonomous structure was focused on the objective reality of the market. Leading in this environment means becoming a curator of goals rather than a supervisor of tasks. You are more like a gardener than a general. You prepare the soil, you ensure there is enough light, and you let the growth happen on its own terms.

The tension comes when the system does something unexpected. Last month, one of my autonomous units decided to pivot an entire product pitch because it detected a shift in sentiment on a niche forum that hadn’t even hit the mainstream news yet. A year ago, I would have stopped that. I would have demanded a committee meeting and three rounds of revisions. This time, I just watched. By the time I finished my second cup of coffee, the new strategy had already generated a lead that turned into our biggest contract of the quarter. It makes you feel small, but in a way that is oddly liberating.

The quiet evolution of AI leadership

If you look at the successful firms right now, you won’t see leaders who are technical geniuses. You see leaders who are masters of context. AI leadership in 2026 is about the ability to translate human values and long-term vision into parameters that an autonomous system can respect. It is a linguistic challenge as much as a strategic one. If you can’t define what “ethical growth” or “brand integrity” means in a way that survives the cold logic of an algorithm, your team will eventually drift into places you don’t want to go.

There is no manual for this. Most of the books written on leadership in the 2010s are essentially decorative objects now. They talk about empathy and motivation, which are still vital for the humans left in the room, but they say nothing about how to maintain a culture when 80 percent of your “staff” doesn’t have a pulse. The challenge is keeping the human soul of a company alive when the execution is entirely automated. You have to find a way to inject “taste” into the machine. Taste is the one thing these systems still struggle to replicate. They can be perfect, but they can’t always be cool, or weird, or profoundly moving. That is where we still live.

I’ve found that my job has moved from “how” to “should.” Should we enter this market? Should we prioritize this demographic even if the data says it’s less profitable in the short term? These are the questions that keep the lights on in a world of autonomous teams. The machines provide the answers to “how,” but the “should” remains a stubbornly human burden. It’s a heavy one, too. When the execution is flawless, any failure in the end result rests entirely on the quality of your intent. You can no longer blame poor implementation. If the ship hits an iceberg, it’s because you told it to sail toward the ice.

We are seeing a thinning out of middle management across the United States, from the tech hubs in San Francisco to the financial centers in New York. The people who survive are those who can sit in the silence of a self-managing office and not feel the need to create busywork just to feel important. It requires a level of psychological security that many high-achievers simply don’t possess. We are addicted to being needed. We love the “fire drill.” But in a high-functioning autonomous environment, there are no fires, only anomalies to be analyzed.

The silence of a modern office is the sound of efficiency, but it can also be the sound of isolation. I sometimes miss the chaos. I miss the messy, loud, inefficient debates over a whiteboard. There was a humanity in the friction that we’ve polished away. Now, when I walk through the office, I see people focused on high-level strategy and creative leaps, while the heavy lifting of the business happens in the background, invisible and relentless. It is a cleaner way to work, but it demands a different kind of stamina.

As we move further into this decade, the boundary between the leader and the system will continue to blur. We aren’t just using tools anymore; we are operating within a partnership where the “team” is a hybrid of carbon and silicon. It’s not a takeover; it’s an integration. The people who will lead the most successful organizations won’t be the ones with the best code or the most data. They will be the ones who can maintain their humanity in a world that no longer requires it for the day-to-day grind.

The neon sign across the street finally flickered out. My dashboard shows that three more projects have been completed since I started writing this. The world is moving on, with or without my permission. The only thing left to do is decide what kind of “boss” I want to be in a world that doesn’t really need a boss at all. It’s a strange, quiet frontier. I think I’ll stay a while and see where it goes.

FAQ

An autonomous team is a group of AI agents and human specialists where the agents handle the bulk of execution, coordination, and optimization without direct human oversight.

The boss becomes the “Chief Vision Officer.” They are the soul of the machine, even if they aren’t turning the gears.

Identify the most repetitive coordination task in your workflow and find an agentic tool to replace the human “middleman” in that specific process.

Yes, they are actually better at it because they can monitor every transaction against a database of laws in real time.

Success is measured by the delta between the goal set and the outcome achieved, with minimal human “intervention hours” as a key efficiency metric.

Often no. When the coordination is automated, the physical location of the human “curators” becomes irrelevant.

By setting up multi-agent “adversarial” systems where one AI checks the work and logic of another.

Much of the day is spent monitoring high-level KPIs, refining the “intent” of the systems, and focusing on long-term relationships that AI can’t handle.

It started in tech and finance, but it is rapidly spreading to logistics, healthcare administration, and even local government.

They communicate through high-speed data protocols and shared context windows, which is far faster and more accurate than human meetings.

Empathy remains crucial for the human members of the team who may feel alienated or pressured by the speed of the automated systems.

Not exactly. It means the nature of management shifts from task supervision to high-level goal setting and ethical oversight.

It levels the playing field. A three-person team in a garage can now have the operational capacity of a Fortune 500 company.

No, you need to learn how to communicate. The interface is increasingly natural language, not syntax.

The risk is “drift”—where the system optimizes for a metric (like profit) so aggressively that it violates unstated human values or long-term brand health.

They can execute creative tasks based on patterns, but they still rely on humans for the “spark” or the final judgment on what feels “right.”

Culture becomes about the shared vision and the specific “taste” that humans inject into the automated output.

It leads to “role migration.” Routine coordination and middle-management tasks are disappearing, while roles focused on strategy and “taste” are expanding.

The leader is responsible. Because the execution is automated, the “mistake” usually lies in the original parameters or the lack of sufficient guardrails.

It’s increasingly about “intent-shaping”—the ability to communicate complex human values and goals into prompts and constraints the system understands.

Traditional software follows rigid “if-then” rules; modern automation learns from data and can pivot strategies in real time without being told.